In 1913, a young Indian mathematician named Srinivasa Ramanujan sent a letter filled with strange formulas to G.H. Hardy, a renowned mathematician at Cambridge. Hardy, initially skeptical, soon realized that the sender was no ordinary man: Ramanujan had uncovered deep mathematical truths with almost no formal training in higher mathematics. One of Ramanujan’s most astonishing ideas was about partitions, the number of ways a whole number can be written as a sum of smaller whole numbers. For example, 4 has five partitions: 4, 3+1, 2+2, 2+1+1 and 1+1+1+1. Ramanujan developed a formula to predict the number of partitions with remarkable accuracy, almost as if by magic. But he didn’t have a proof, just his instinct.

Hardy was intrigued but cautious. He rigorously examined Ramanujan’s formula. After months of hard work, he finally proved it correct. This moment was a turning point—it showed the world that Ramanujan’s intuition was not just luck. He had an extraordinary way of seeing patterns that others missed, but he needed someone like Hardy to validate and explain them to the world.
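
To make the idea of "predicting the number of partitions" concrete, here is a minimal Python sketch. It assumes the formula in question is the leading term of what is now called the Hardy-Ramanujan asymptotic formula; the function names are my own, chosen for illustration. It counts partitions exactly and compares the count with the estimate.

```python
import math


def partition_count(n: int) -> int:
    """Exact number of partitions p(n), by dynamic programming over part sizes."""
    ways = [1] + [0] * n              # ways[0] = 1: the empty partition
    for part in range(1, n + 1):      # allow parts of size `part`
        for total in range(part, n + 1):
            ways[total] += ways[total - part]
    return ways[n]


def hardy_ramanujan_estimate(n: int) -> float:
    """Leading-term estimate: p(n) ~ exp(pi * sqrt(2n/3)) / (4 * n * sqrt(3))."""
    return math.exp(math.pi * math.sqrt(2 * n / 3)) / (4 * n * math.sqrt(3))


for n in (10, 50, 100):
    exact = partition_count(n)
    estimate = hardy_ramanujan_estimate(n)
    print(f"n={n:3d}  exact={exact}  estimate={estimate:.0f}  ratio={estimate / exact:.3f}")
```

For n = 100 the exact count is 190,569,292, and the one-line estimate lands within about 5 percent of it. The relative error shrinks as n grows, which is what makes the formula feel like intuition that happens to be right.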

AI’s Intuition Without Proof

Today, Artificial Intelligence (AI) is much like Ramanujan. It generates solutions, predicts outcomes, and offers insights, but often without explaining how it arrived at them. AI itself doesn't "know" how it got there, because the patterns it learned from training data are encoded as numeric weights and probabilistic vector representations rather than as explicit, deterministic rules.

LLMs are a lossy compression of the internet.

— Andrej Karpathy

In fact, the chain of thought we see in reasoning models is often a post-hoc rationalization rather than a true derivation of the solution—formulated to appear logical rather than derived through a rigorous deductive process. There are two approaches to solve this:

- In the long term, AI companies are likely to build interpretable (explainable) AI that explains its reasoning through deductive steps, similar to math steps or programming code, making its derivations transparent. Neurosymbolic AI could generate deterministic code or rules that humans can review and execute consistently, unlike LLMs, whose sampled outputs can differ from one run or one user to the next even for the same question.

- In the short term, we need human reasoners who validate AI-generated insights, much like Hardy did for Ramanujan. They can analyze AI’s solutions, refine its ideas, and prove its intuition with deductive reasoning. Even in the long term, we might still need reasoners to peer review scientific discoveries made by interpretable AI, to ensure that AI’s intuition is grounded in deduction—especially in critical areas like cancer research, defence and nuclear fission. Human reasoners will remain essential for safe AI alignment and to prevent hallucination in high-stakes domains.

Impact on the Labor Market

Historically, the job market was divided into two categories:

- Blue-collar jobs — e.g. users who operate software to complete their work.

- White-collar jobs — e.g. engineers who build that software for users.

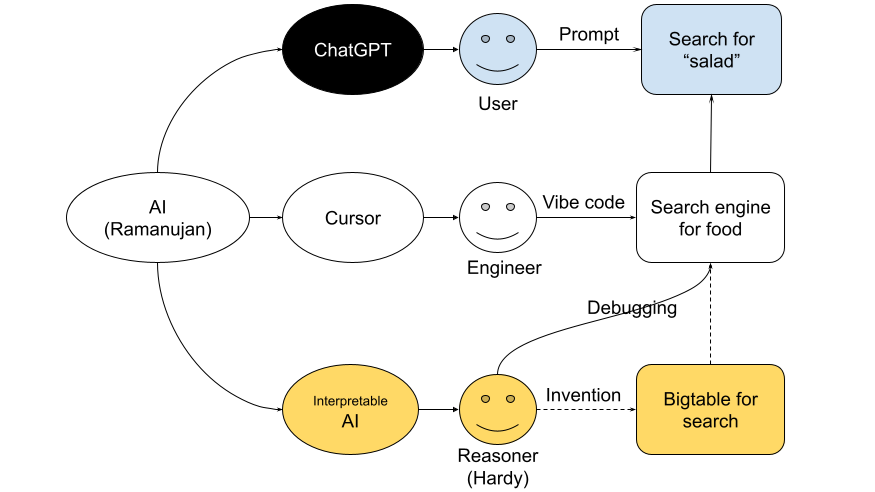

AI is now creating a new category: reasoners, people who understand how AI generated a piece of software and can intervene when AI cannot fully comprehend changing requirements. I recently experienced this while searching for healthy food:

- As a user: I asked ChatGPT to find a healthy "protein salad" I could buy in San Francisco and to rank them by NutriScore. It found relevant salads, but made up the NutriScores: the first salad was rated “A” (healthy), the second “B,” and the third “C” (not so healthy)—without showing how these scores were derived. It was like a school student writing answers in an exam without showing the steps. So, I had to act like a teacher, explaining how to calculate the NutriScore.

- As an engineer: I wanted to build a search engine for healthy food instead of explaining NutriScore calculation to ChatGPT every time. But when I tried to vibe-code it using Cursor, it failed to classify the food correctly. NutriScore is calculated differently for each food category, such as vegetables vs. meat. This required an AI pipeline: first, classify the food; then extract nutrient data from the webpage; if nutrients are missing, generate them based on the ingredients; and finally, calculate the score based on category and nutrients.

- As a reasoner: A vibe coder can build a CRUD app today. But building or debugging an AI pipeline requires deeper understanding. For Neartail’s food search, we needed reasoners, not just vibe coders. This doesn’t stop with applications. Google started as a search engine, but to index the web, they built Bigtable, pushed against the limits of the CAP theorem, and created Firestore, which we now use as a simple JavaScript library. In the same way, we ended up creating our own fine-tuning tool to build AI models like the NutriScore calculator.
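
The four-step pipeline described above (classify, extract, fill in missing nutrients, score by category) can be sketched in Python. This is an illustration under loud assumptions, not Neartail's implementation: every function name is hypothetical, the keyword and lookup-table stubs stand in for model calls, and the scoring rules and nutrient values are made-up placeholders rather than the real NutriScore algorithm. What it does show is each step leaving a trace, so the final grade comes with its derivation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Nutrients:
    # Per-100 g values; fields and units are illustrative only.
    protein_g: float
    sugar_g: float
    saturated_fat_g: float


def classify_food(page_text: str) -> str:
    """Step 1 (stub): a keyword check standing in for a classifier model."""
    return "meat" if "chicken" in page_text.lower() else "vegetables"


def extract_nutrients(page_text: str) -> Optional[Nutrients]:
    """Step 2 (stub): parse a line like 'nutrients: protein=12 sugar=3 satfat=1'."""
    for line in page_text.splitlines():
        if line.lower().startswith("nutrients:"):
            fields = dict(pair.split("=") for pair in line.split(":", 1)[1].split())
            return Nutrients(float(fields["protein"]), float(fields["sugar"]),
                             float(fields["satfat"]))
    return None  # the page did not list nutrients


# Made-up per-ingredient values, standing in for a model that estimates nutrients.
INGREDIENT_ESTIMATES = {
    "chicken": Nutrients(27.0, 0.0, 1.0),
    "lettuce": Nutrients(1.5, 1.0, 0.0),
}


def estimate_nutrients(ingredients: list[str]) -> Nutrients:
    """Step 3 (stub): average the known ingredients when the page has no data."""
    known = [INGREDIENT_ESTIMATES[i] for i in ingredients if i in INGREDIENT_ESTIMATES]
    if not known:
        raise ValueError("no nutrient data and no known ingredients")
    count = len(known)
    return Nutrients(sum(k.protein_g for k in known) / count,
                     sum(k.sugar_g for k in known) / count,
                     sum(k.saturated_fat_g for k in known) / count)


def score(category: str, n: Nutrients) -> str:
    """Step 4: placeholder category-specific rules (NOT the real NutriScore)."""
    if category == "meat":
        points = n.protein_g - 2 * n.saturated_fat_g - n.sugar_g
    else:
        points = n.protein_g - n.saturated_fat_g - 2 * n.sugar_g
    return "A" if points >= 10 else "B" if points >= 0 else "C"


def rate_food(page_text: str, ingredients: list[str]) -> tuple[str, list[str]]:
    """Run all four steps and return the grade with a trace of how it was derived."""
    trace = []
    category = classify_food(page_text)
    trace.append(f"category={category}")
    nutrients = extract_nutrients(page_text)
    if nutrients is None:
        nutrients = estimate_nutrients(ingredients)
        trace.append("nutrients estimated from ingredients")
    else:
        trace.append("nutrients extracted from page")
    grade = score(category, nutrients)
    trace.append(f"grade={grade}")
    return grade, trace


print(rate_food("Grilled chicken salad\nnutrients: protein=12 sugar=3 satfat=1", ["chicken"]))
print(rate_food("Green salad", ["lettuce"]))
```

Because each stage is a separate function, a reasoner can inspect or replace one step (for example, swapping the keyword stub for a fine-tuned classifier) without touching the others, and the trace makes a wrong grade debuggable.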

With AI, reasoners can create a self-reinforcing loop of discovery: the more complex the problems they try to solve, the more they can brainstorm with AI and tackle fundamental problems like the CAP theorem.

Summary

The good news is that many engineers with deep interest will become reasoners with the help of AI. The bad news is that white-collar jobs once seen as intellectually demanding—like coding—will become so automated that they risk devolving into routine, paper-pushing roles. Many in these roles may one day speak of the “good old days” of meaningful work, much like blue-collar workers today. So, there is no stable middle ground. Either we evolve into a reasoner, leveraging AI for intellectual discovery, or we risk becoming obsolete—a paper pusher in the age of AI. If AI is Ramanujan, the Hardys of the world must rise—or risk being replaced by the very machine we helped create.

Mar 26, 2025

Great content and made us to think where we are heading.
